Abstract

The Bayesian treatment of neural networks requires specifying a prior distribution over their weight and bias parameters. This poses a challenge because modern neural networks have a large number of parameters, and the choice of these priors has an uncontrolled effect on the induced functional prior, that is, the distribution of the functions obtained by sampling the parameters from their prior distribution. We argue that this is a severely limiting aspect of Bayesian deep learning, and this work tackles this limitation in a practical and effective way. Our proposal is to reason in terms of functional priors, which are easier to elicit, and to “tune” the priors of neural network parameters so that they reflect such functional priors. Gaussian processes offer a rigorous framework to define prior distributions over functions, and we propose a novel and robust framework to match their prior with the functional prior of neural networks based on the minimization of their Wasserstein distance. We provide extensive experimental evidence that coupling these priors with scalable Markov chain Monte Carlo (MCMC) sampling offers systematically large performance improvements over alternative choices of priors and state-of-the-art approximate Bayesian deep learning approaches. We consider this work a considerable step toward making a fully Bayesian treatment of neural networks, including convolutional neural networks, a concrete possibility, addressing a long-standing challenge.
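To make the notion of an induced functional prior concrete, the following minimal sketch (Python with NumPy) draws every parameter of a one-hidden-layer tanh network from an independent Gaussian prior and evaluates the resulting functions on a grid of inputs. The architecture, the fan-in scaling, and the helper name `mlp_prior_functions` are illustrative assumptions, not details taken from this work; the only point is that changing a single prior hyperparameter visibly changes the distribution over functions.

```python
import numpy as np


def mlp_prior_functions(x, n_samples, weight_std, bias_std=1.0, width=50, seed=0):
    """Hypothetical helper: draw functions from the prior induced by Gaussian weights.

    x has shape (n_points, d_in); the result has shape (n_samples, n_points),
    with one sampled function per row.
    """
    rng = np.random.default_rng(seed)
    n_points, d_in = x.shape
    samples = np.empty((n_samples, n_points))
    for s in range(n_samples):
        # Sample every parameter from its prior (fan-in scaling is an assumption).
        w1 = rng.normal(0.0, weight_std / np.sqrt(d_in), size=(d_in, width))
        b1 = rng.normal(0.0, bias_std, size=width)
        w2 = rng.normal(0.0, weight_std / np.sqrt(width), size=(width, 1))
        b2 = rng.normal(0.0, bias_std)
        hidden = np.tanh(x @ w1 + b1)
        samples[s] = (hidden @ w2).ravel() + b2
    return samples


x = np.linspace(-3.0, 3.0, 100).reshape(-1, 1)
spread = {}
for std in (0.5, 1.0, 3.0):
    f = mlp_prior_functions(x, n_samples=200, weight_std=std)
    # Average pointwise standard deviation of the sampled functions.
    spread[std] = f.std(axis=0).mean()
print(spread)
```

Only the weight standard deviation changes between runs, yet the spread (and, if plotted, the shape) of the sampled functions changes markedly, which is the kind of indirect effect that motivates reasoning in function space.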

Key Contributions

Functional priors instead of parameter priors: We argue that specifying priors over neural network parameters is a limiting aspect of Bayesian deep learning, and propose to reason directly in terms of functional priors.

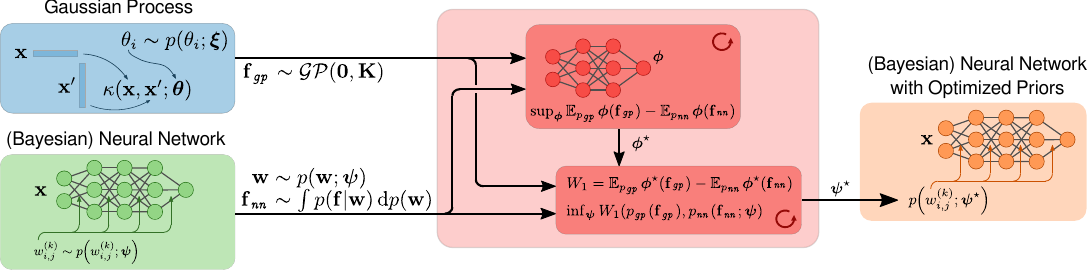

A principled framework for matching priors: We use Gaussian processes to define prior distributions over functions and propose a framework to match their prior with the functional prior of neural networks based on Wasserstein distance minimization.

Robust and practical implementation: The matching framework is robust and applies to a range of neural network architectures, including convolutional neural networks.

Empirical validation: We provide extensive experimental evidence that coupling these functional priors with scalable MCMC sampling offers systematically large performance improvements over alternative choices of priors and state-of-the-art approximate Bayesian deep learning approaches.
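The matching idea in the contributions above can be illustrated with a simplified, hypothetical sketch. It draws samples from a Gaussian process prior with an RBF kernel and from the functional prior of a small tanh network at a fixed set of measurement points, then selects the weight-prior standard deviation whose function samples are closest to the GP samples. Note the simplifications: the framework described here minimizes the Wasserstein distance between the two distributions over functions, whereas this sketch swaps in a cheaper surrogate (the average one-dimensional Wasserstein-1 distance between the marginals at each measurement point) and a grid search over a single hyperparameter instead of gradient-based optimization. All function names and settings are assumptions for illustration.

```python
import numpy as np


def rbf_gp_samples(x, n_samples, lengthscale=1.0, variance=1.0, seed=0):
    """Hypothetical helper: zero-mean GP prior samples with an RBF kernel at inputs x (n, 1)."""
    rng = np.random.default_rng(seed)
    sq_dist = (x - x.T) ** 2
    k = variance * np.exp(-0.5 * sq_dist / lengthscale**2)
    # A small jitter keeps the Cholesky factorization numerically stable.
    chol = np.linalg.cholesky(k + 1e-6 * np.eye(len(x)))
    return (chol @ rng.standard_normal((len(x), n_samples))).T


def mlp_prior_samples(x, n_samples, weight_std, width=50, seed=1):
    """Hypothetical helper: functions from a one-hidden-layer tanh MLP with Gaussian priors."""
    rng = np.random.default_rng(seed)
    out = np.empty((n_samples, len(x)))
    for s in range(n_samples):
        w1 = rng.normal(0.0, weight_std, size=(1, width))
        b1 = rng.normal(0.0, weight_std, size=width)
        w2 = rng.normal(0.0, weight_std / np.sqrt(width), size=(width, 1))
        out[s] = (np.tanh(x @ w1 + b1) @ w2).ravel()
    return out


def wasserstein_1d(a, b):
    """Exact W1 between two 1-D empirical distributions with equal sample counts."""
    return np.mean(np.abs(np.sort(a) - np.sort(b)))


def marginal_wasserstein(f_gp, f_nn):
    """Surrogate distance: average W1 between the marginals at each measurement point."""
    return np.mean([wasserstein_1d(f_gp[:, j], f_nn[:, j]) for j in range(f_gp.shape[1])])


# Measurement points at which both priors are compared.
x = np.linspace(-3.0, 3.0, 40).reshape(-1, 1)
f_gp = rbf_gp_samples(x, n_samples=500)

# Grid search over the single weight-prior hyperparameter.
grid = [0.25, 0.5, 1.0, 2.0, 4.0]
scores = {s: marginal_wasserstein(f_gp, mlp_prior_samples(x, 500, s)) for s in grid}
best_std = min(scores, key=scores.get)
print(scores, best_std)
```

Because the surrogate only compares marginals, it ignores correlations between function values at different inputs (for example, smoothness), which a distance between the full distributions over functions would capture. The grid search is used only to keep the example short; with one prior hyperparameter per layer or per parameter group, a differentiable estimate of the distance optimized by gradient descent would be the natural replacement.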

Methodology Overview

The core idea is to shift the focus from parameter space to function space. Instead of trying to design priors directly on the weights and biases of neural networks, we:

Specify a functional prior: Choose a prior distribution over functions (for example, a Gaussian process) that encodes our beliefs about the function we want to learn.

Match to neural network priors: Find a prior distribution over neural network parameters that induces a functional prior close to our specification, using Wasserstein distance minimization.

Bayesian inference: Perform Bayesian inference using scalable MCMC sampling methods with the matched priors.
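The final inference step can be sketched with stochastic gradient Langevin dynamics (SGLD), one example of a scalable MCMC method. To stay short and self-contained, the sketch uses Bayesian linear regression rather than a neural network, and an isotropic Gaussian prior with standard deviation `prior_std` stands in for a prior obtained from the matching step. The function name `sgld_linear_regression` and all default values are hypothetical, and SGLD is not necessarily the sampler used in this work.

```python
import numpy as np


def sgld_linear_regression(x, y, prior_std, noise_std=0.5, step=1e-4,
                           n_steps=5000, batch_size=32, burn_in=1000, seed=0):
    """Hypothetical helper: SGLD samples from p(theta | x, y) for y = x @ theta + noise."""
    rng = np.random.default_rng(seed)
    n, d = x.shape
    theta = np.zeros(d)
    samples = []
    for t in range(n_steps):
        idx = rng.choice(n, size=batch_size, replace=False)
        xb, yb = x[idx], y[idx]
        # Gradient of the log prior N(0, prior_std^2 I).
        grad_prior = -theta / prior_std**2
        # Mini-batch gradient of the Gaussian log-likelihood, rescaled to the full data set.
        grad_lik = (n / batch_size) * xb.T @ (yb - xb @ theta) / noise_std**2
        # Langevin update: half-step along the gradient plus N(0, step) noise.
        theta = theta + 0.5 * step * (grad_prior + grad_lik) \
            + rng.normal(0.0, np.sqrt(step), size=d)
        if t >= burn_in:
            samples.append(theta.copy())
    return np.array(samples)


# Synthetic data with known weights.
rng = np.random.default_rng(42)
x = rng.normal(size=(500, 2))
true_w = np.array([2.0, -1.0])
y = x @ true_w + rng.normal(0.0, 0.5, size=500)

samples = sgld_linear_regression(x, y, prior_std=1.0)
print(samples.mean(axis=0), samples.std(axis=0))
```

Each step uses a mini-batch gradient rescaled by n / batch_size plus injected Gaussian noise of variance `step`, so the cost per step does not grow with the data set size. The step size has to be small relative to the curvature of the log posterior; otherwise the chain diverges.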

This approach gives direct control over the prior behavior of the functions the network can represent, and leads to more reliable uncertainty estimates.

Main Findings

- Functional priors provide a more intuitive and controllable way to specify prior beliefs in Bayesian neural networks.

- The Wasserstein distance minimization framework offers a principled way to translate functional priors into parameter priors.

- Empirical results show systematic improvements over standard prior choices and approximate Bayesian deep learning methods.

- The approach scales to practical neural network architectures, including convolutional networks.

- This work represents a significant step toward a fully Bayesian treatment of neural networks in practice.